-

Lattice simulation is an important systematic method to solve strongly correlating and interacting systems in the framework of quantum field theory at finite temperatures [1, 2]. By sampling numerous configurations according to the action, physical observables are computed by ensemble averaging. The background physical mechanism can only be explored by certain integral quantities because of the large amount of configurations and unavoidable fluctuations during the sampling process [3, 4]. To test or realize a certain physical mechanism, different effective models have been developed. Such models are built with specific effective degrees of freedom (d.o.f) and by introducing proper interactions between them [5]. Starting from the fundamental theory, building an effective model is not usually straightforward because of the difficulty of choosing the key d.o.f and including the corresponding interactions. There are several successful examples, such as the Cooper pair for superconductivity, the vortex for the XY model, and various soliton solutions for quantum chromodynamics (QCD) [6−11]. Most of such key d.o.f are obtained by solving semi-classical equations. To complete the effective model, the fluctuations about the chosen d.o.f must be integrated out properly, which is usually a difficult and tedious task.

From the viewpoint of functional integration of quantum field theory, the Lagrangian density is encoded in the distribution of the configuration set [12]. The emerging probability of each configuration is determined by its action. If the correctly distributed ensemble is known, for example by coarse-graining the fundamental lattice configuration into the chosen effective one, the action can be extracted by estimating the probability of the configuration [13], which is an almost impossible task [14, 15] using traditional methods for such a high dimensional distribution. However, popular deep learning frameworks recently proposed are suitable for solving such problems [16−19]. Based on the variational ansatz that decomposes the probability of a lattice configuration into a conditional probability product, a class of autogressive networks has been developed for probability estimation issues [20−22]. For classical systems, such frameworks have already been introduced to study underlying interaction details of condensed matter, chemistry, and biology systems [23−26]. Although some attempts have been reported to learn the action in quantum lattice field theories [27, 29, 30], research on estimations based on external parameters, e.g., temperature dependence, remains notably scarce.

The imaginary-time thermal field theory reformulates quantum statistics by compacting the time direction onto an imaginary-time ring [31]. As a result, the complex weight

$ \exp(i S) $ of a configuration becomes a real probability$ \exp(-S) $ . If the integral is discretized over imaginary time, it can be found that the temperature dependences of kinetic and potential parts are different but explicit [32, 33]. This implies that following basically the same procedure as that in the classical case can work for quantum ones, except that more than one ensemble is needed to determine the whole phase diagram along the temperature axis. In this paper, we briefly review the classical case to clarify the paradigm of constructing an effective model with artificial neural networks (ANNs). Then, the quantum version is discussed. We show that if the potential part is independent on the imaginary time, only two ensembles at different temperature are enough to determine the action of each configuration if the Lagrangian density is composed by the sum of kinetic and local potential terms. We use an example of quantum mechanics (0+1D field theory) to show the application of the suggested procedure and adopt continuous-mixture autoregressive networks (CANs) to estimate the probability [25]. Numerical experiments demonstrate that to predict the action of one sample at a certain temperature, only two different ensembles suffice. In the interpolation case, i.e., when the predicted temperature falls in the range of two known temperatures, the proposed approach is optimal. This is acceptable because, when the phase structure is approximately characterized at two ends by an effective model, its estimation for intermediate states should not deviate too much from the correct value. -

In classical statistics, the probability of one sample in a physical ensemble is determined by the energy of the sample, which is given by the Hamiltonian H of the model. Taking a spin system as an example, a configuration is represented as a set of spin values at every site as

$ \sigma=\{s_1, s_2, ..., s_N\} $ , and the thermodynamic properties of the system at a certain temperature T are governed by the ensemble whose samples are distributed according to the Hamiltonian$ H(\sigma) $ following the Boltzmann distribution:$ P(\sigma)\propto\exp(-H(\sigma)/T). $

(1) Once the Hamiltonian of the system is known, one can obtain the ensemble by applying certain sampling methods, such as Markov chain Monte Carlo (MCMC). In this study, we focused on the inverse problem, i.e., extracting the Hamiltonian, or equivalently estimating the probability, when an ensemble

$ \{\sigma^{(1)}, \sigma^{(2)}, ..., \sigma^{(M)}\} $ is known, where M is a large enough integer for an ensemble. Clearly, it is difficult to estimate the high-dimensional probability distribution using traditional methods; this is the so-called curse of dimensionality [16]. Fortunately ANNs constitute a suitable solution for this problem [25, 27]. ANNs are helpful in two aspects. First, an effective numerical model can be constructed via ANNs in a more straightforward way. In particular, based on the original ensemble, each sample$ \sigma^{(i)} $ can be reconstructed by an effective mode$ \Sigma^{(i)} $ , such as the vortex in the XY model, to obtain a correctly distributed ensemble [28]. ANNs allow for the extraction of the energy, or equivalently the probability, of$ \Sigma^{(i)} $ . Thus, a numerical interaction of the effective mode is expressed as$ H(\Sigma)=-T \ln (P(\Sigma))+C, $

(2) where the constant C corresponds to the partition function. Second, if the ensemble can be experimentally measured, even in a more macroscopic degree of freedom, a numerical Hamiltonian can be constructed by an ANN. Once this Hamiltonian is obtained, the system properties at different temperatures can be estimated by generating a new ensemble according to the Boltzmann distribution with the help of a standard sampling method. It should be noted that the temperature dependence is explicitly introduced as a physical prior for the construction of the numerical Hamiltonian using ANNs [26].

-

We further consider the quantum version of the above problem, which is based on several known ensembles at one or more temperatures to extract the action by estimating the probability density of each sample. The quantum effects can be introduced into classical statistics by either considering the Hamiltonian as a quantum operator

$ \exp(-{\hat H}/T) $ , or integrating over all possible evolution processes, which is known as the path integral approach of the quantum theory. At zero temperature, the weight of a quantum state is expressed as a complex factor,$ \exp(-i S) $ , which cannot be applied for real-valued ANNs. However, for finite temperatures, the imaginary formalism compacts the time onto a ring with radius$ \beta=T^{-1} $ of imaginary time$ \tau=i t $ . In such a formalism, the partition function is$ Z=\int D\Phi \exp(-S[\Phi]), $

(3) where the action is generally expressed as

$S[\Phi]= \int_0^\beta {\rm d}\tau {\rm d}^3x[(\partial_\tau\Phi)^2+(\nabla\Phi)^2+ V(\Phi)]$ if we only consider the usual local and Lorentz covariant Bosonic field system, for example. For fermionic cases, a proper Hubbard-Stratonovich transformation can be applied to obtain a bonsonic one. Although the temperature-dependence is more complicated than the classical case, which is${\rm e}^{-E/T}$ , it is still tractable if the discretization formalism is explicitly expressed as$ \begin{aligned}[b] S[\Phi]=\;&\sum \Delta\tau (\Delta x)^3\left[(\frac{\Delta \Phi}{\Delta \tau})^2+(\nabla\Phi)^2+V(\Phi)\right]\\ =\;&\sum (\Delta x)^3\left[\frac{(\Delta \Phi)^2}{\Delta \tau}+\Delta\tau((\nabla\Phi)^2+V(\Phi))\right]\\ =\;&\beta^{-1}K+\beta V \end{aligned}$

(4) where

$ \Delta\tau=\beta/N_\tau $ , the first term is part of the kinetic term denoted as$ K\equiv N_\tau\sum (\Delta x)^3{(\Delta \Phi)^2} $ , and the second term includes all the time-independent terms denoted as$ V\equiv N_\tau^{-1}\sum (\Delta x)^3{[(\nabla\Phi)^2+V(\Phi)]} $ . The temperature dependences of these two terms are different and separable with regard to the quantum fields. It is evident that once actions of a sample at two given temperatures are estimated from$ \begin{aligned}[b]& S_1[\Phi]=\beta_1^{-1}K[\Phi]+\beta_1 V[\Phi]+C_1\\ &S_2[\Phi]=\beta_2^{-1}K[\Phi]+\beta_2 V[\Phi]+C_2, \end{aligned} $

(5) the K and V terms can be obtained and the action at any third temperature is expressed as

$ S_3[\Phi]=\frac{\beta_1(\beta_3^2-\beta_2^2)}{\beta_3(\beta_1^2-\beta_2^2)}S_1+ \frac{\beta_2(\beta_1^2-\beta_3^2)}{\beta_3(\beta_1^2-\beta_2^2)}S_2+C_3, $

(6) where

$ C_3=\frac{\beta_1(\beta_2^2-\beta_3^2)}{\beta_3(\beta_1^2-\beta_2^2)}C_1+ \frac{\beta_2(\beta_3^2-\beta_1^2)}{\beta_3(\beta_1^2-\beta_2^2)}C_2. $

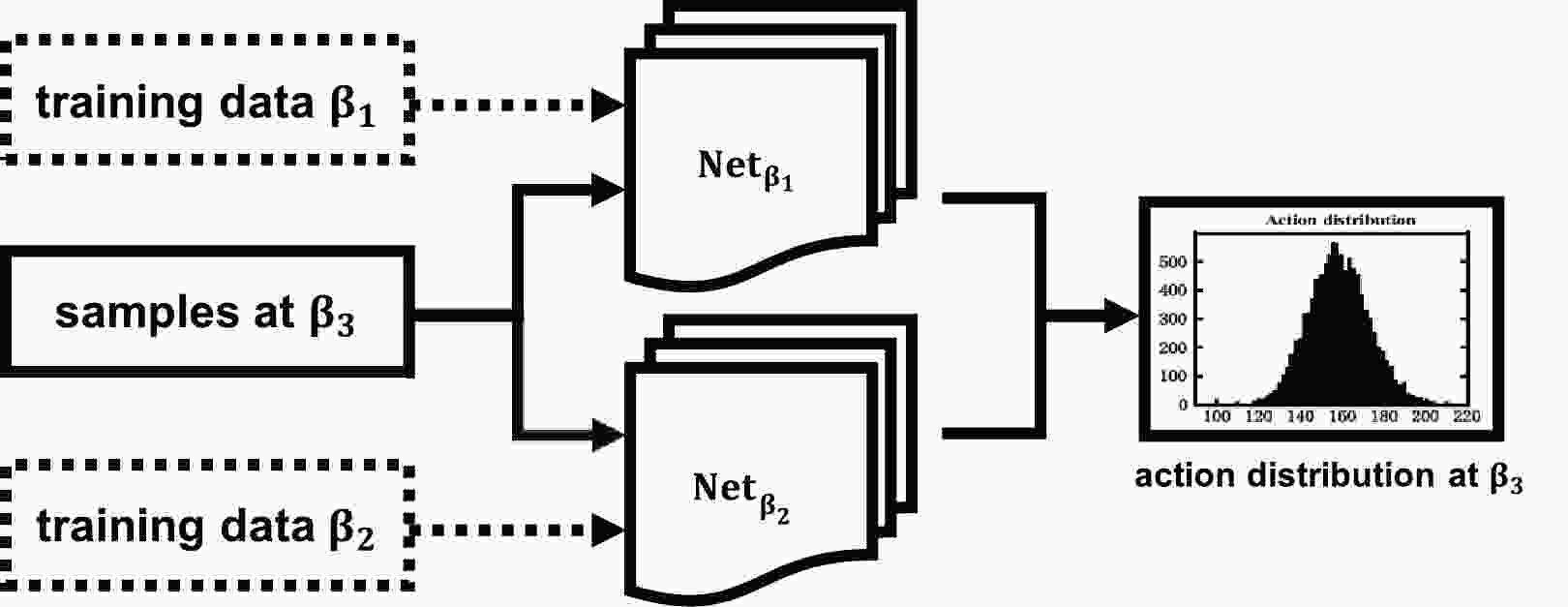

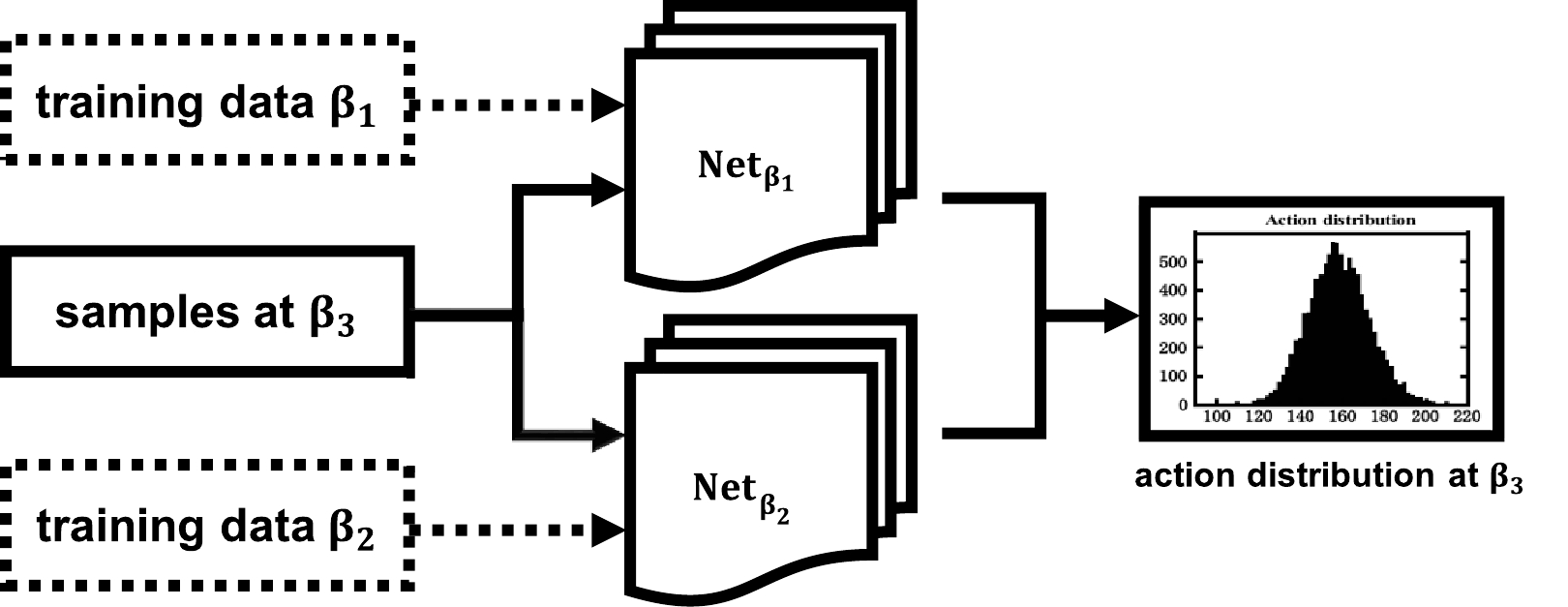

(7) Employing ANNs as the classical case twice, we can extract the probability (up to a global constant) of any sample in a correctly distributed ensemble at two given temperatures. Equivalently, the temperature-independent terms K and V can be determined by feeding two ensembles — generated via certain sampling algorithms — into ANNs. The network is then trained to output the numerical action or probability density associated with each sample. Utilizing two such ensembles at distinct temperatures allows for encoding interaction details within two respective ANNs, denoted as

$ T_1 $ and$ T_2 $ . This methodology obviates the need for an analytical expression of the Lagrangian density.A schematic flowchart is shown in Fig. 1. This quantum version of the algorithm indicates that only two ensembles are required to reconstruct the interaction details of a system. Nevertheless, in principle, the whole evolution history along the imaginary time will contribute to the quantum partition function.

-

A simple 1D quantum mechanical system is enough to conduct an experiment based on the algorithm previously described. The Lagrangian is

$ L=\frac{1}{2}\left(\frac{{\rm d}x}{{\rm d}\tau}\right)^{2}+V_{k}(x). $

(8) We introduce a standard double-well potential as

$ V_{k}(x)=\frac{\lambda_{k}}{4}\left(x^{2}-\frac{\mu_{k}^{2}}{2 \lambda_k}\right)^{2}. $

(9) Here,

$ \lambda_k $ is the coupling constant with mass dimension$ [\lambda_k] = [m]^5 $ and$ \mu_k $ is a parameter with mass dimension$ [\mu_k] = [m]^{3/2} $ . We assume that the particle mass parameter (in the kinetic energy term) is m; thus, we work with dimensionless quantities rescaled by proper powers of m (e.g.,$ \mu^2_k/m^3\rightarrow \mu^2_k $ and$ \lambda_k/m^5\rightarrow \lambda_k $ ) throughout this paper. The properties of the system at finite temperature T can be described through the partition function$ \begin{aligned}[b] Z =\;& \int _{x(\beta)=x(0)}Dx\ {\rm e}^{-S_E[x(\tau)]} =\int\prod\limits_{j=-N+1}^{N+1}\frac{{\rm d} x_{j}}{\sqrt{2\pi a}}\\ & \times\exp\left\{-\sum\limits_{i=-N+1}^{N+1}\left[\frac{(x_{i+1}-x_{i})^{2}}{2a}+a{V_k(x_{i})}\right]\right\}, \end{aligned}$

(10) where

$ k_B = \hbar = 1 $ ,$ \beta = 1/T $ is the inverse of temperature T, and$ S_E $ is the Euclidean action in the imaginary formalism given by$ \begin{aligned}[b] S_{E}[x(\tau)]=\;&\int_{0}^{\beta}{\rm d}\tau\ \mathcal{L}_{E}[x(\tau)]\\ =\;&\int_{0}^{\beta}{\rm d}\tau\ \left[\frac{1}{2}\left(\frac{{\rm d}x}{{\rm d}\tau}\right)^{2}+V_{k}(x)\right] . \end{aligned} $

(11) When the interaction coupling is not too large, the system is governed by the semi-classical solutions of the equation of motion. To derive a non-trivial semi-classical solution in the 0+1-dimensional field system explicitly, we can consider the 1D quantum mechanical system with Higgs-like interaction potential. This is a popular potential to realize spontaneous symmetry breaking [32, 34, 35] in a higher dimensional system, while for the 1D case, such a mechanism does not exist. Instead, for such a system, a type of tunneling solution known as kink is a close analog of the instanton in QCD.

The semi-classical solution is obtained by minimizing the action and solving the equation of motion. There are also two nontrivial solutions given by

$ x(\tau) = \pm\frac{\mu_k}{\sqrt{\lambda_k}} \tanh \left[\frac{\mu_{k}}{\sqrt{2}}(\tau -\tau_0)\right]. $

(12) These solutions approach

$ \pm \mu_k/\sqrt{\lambda_k} $ at$ \tau=\pm \infty $ . This means that these two solutions interpolate between two minima over infinitely long imaginary time. Such a behavior is consistent with the ground state of the Schrodinger equation, which is supposed to reach a maximum at the middle of the two minima of the potential. The above solutions, with plus and minus signs, are called the kink and anti-kink solutions, respectively, and represent the tunneling process if one transforms the solution back to the real-time formulation.For numerical simulation, as suggested in Ref. [36], we set

$ \lambda_k=4 $ and$ \mu_{k}/\sqrt{\lambda_{k}}=1.4 $ and adopted the traditional MCMC for sampling at different temperatures [36]. We used an ANN to estimate the probability of each sample and reconstruct the Lagrangian density using two ensembles. To apply MCMC sampling, the continuous variable$ x(\tau) $ must be first discretized. A smaller lattice size certainly requires a larger number of steps before convergence is achieved. As a practical example, in this study, the simulations for different temperatures ($ \beta = T^{-1} = $ 80, 40, 20) were performed with the same lattice size (256) and number of sweeps ($ N_{MC} = 5 \times 10^6 $ ). After discarding the first 50,000 steps, we chose 10,000 configurations randomly in the following sequence as the training set and another 10,000 samples as the testing set for each β. -

CAN is a suitable neural network for extracting the probability density for each sample with continuous d.o.f [20, 25]. In this study, we adopted this neural network to learn the probability density of each sample in quantum systems. The algorithm is constructed according to the Maximum Likelihood Estimate (MLE), which is employed to estimate the probability density in an unsupervised manner [37]. Two basic properties of probability must be satisfied. First, the given probability is positive. Second, two similar configurations yield similar and continuous values of probability. To fulfill both properties in a CAN, we propose factorizing the whole probability of a sample as the product of conditional probabilities at each site and using an appropriate mixture of Beta distributions as the prior probability to ensure positive and continuous requirements. The Beta distribution

$ {\mathcal B}(a, b) $ , with two parameters a and b, is defined as continuous within a finite interval. Therefore, the output layers of the neural network are designed to have two channels for each parameter, and the conditional probabilities at each site are expressed as [38]$ f_{\theta}(s_{i}|s_{1}, \cdots, s_{i- 1})=\frac{\Gamma(a_{i}+b_{i})}{\Gamma(a_{i})\Gamma(b_{i})}s_{i}^{a_{i}-1}(1-s_{i})^{b_{i}-1}, $

(13) where

$ \Gamma(a) $ is the gamma function,$ \{\theta\} $ is a set of trainable parameters of the networks, and$ s_i $ is the configuration of the system. The outputs of the hidden layers are$ a \equiv (a1, a2, ...) $ and$ b \equiv (b1, b2, ... ) $ . The conditional probability is realized by adding a mask which veils all the sites whose indices are larger than a given position before one sample is conveyed to the convolutional layers. This setup is consistent with the locality of microscopic interactions and is capable of preserving any high-order interactions from a restricted Boltzmann machine perspective [39]. With such a conditional probability Ansatz, the joint probability of a sample is expressed as$ q_{\theta}(s)=\prod\limits_{i = 1}^{N}f_{\theta}(s_{i}|s_{1}, \cdots, s_{i-1}). $

(14) The loss function in training is designed by maximizing the probability of the ensemble (training set) according to the MLE principle, i.e., the most physical ensemble is the one with the greatest likelihood to emerge. Hence, the loss is calculated as the logarithmic value of the mixture distribution obtained from the network,

$ L=-\sum\limits_{s\sim q_(data)}\log(q_{\theta}(s)) $

(15) and an Adam optimizer is used to minimize this loss. Within this framework, the problem of density estimation is converted to find

$ \{a_i\} $ and$ \{b_i\} $ for each site that works for all samples in the ensemble. In this way, we do not have to determine different (unknown) probabilities for each sample; we simply obtain the positivity and similarity of the sample probability. The only Ansatz is the method of factorizing the whole probability of a sample. It works better if the explicit form of the conditional probability (Beta distribution in this study) is chosen to achieve a better expression ability.In an unsupervised manner, the training process is equivalent to find the correct probability of each sample. The required training data comprise only two physically distributed ensembles at different temperatures and no analytical Lagrangian density expressions are required by the CAN. These training data are obtained via traditional MCMC simulations [33].

-

In this section, we report on the training and validation stages of CANs. CANs succeed when it comes to reproducing the correct action of most configurations at different temperatures without knowing the analytical form of the Lagrangian density (in an unsupervised manner). To train the network, we prepared three ensembles at

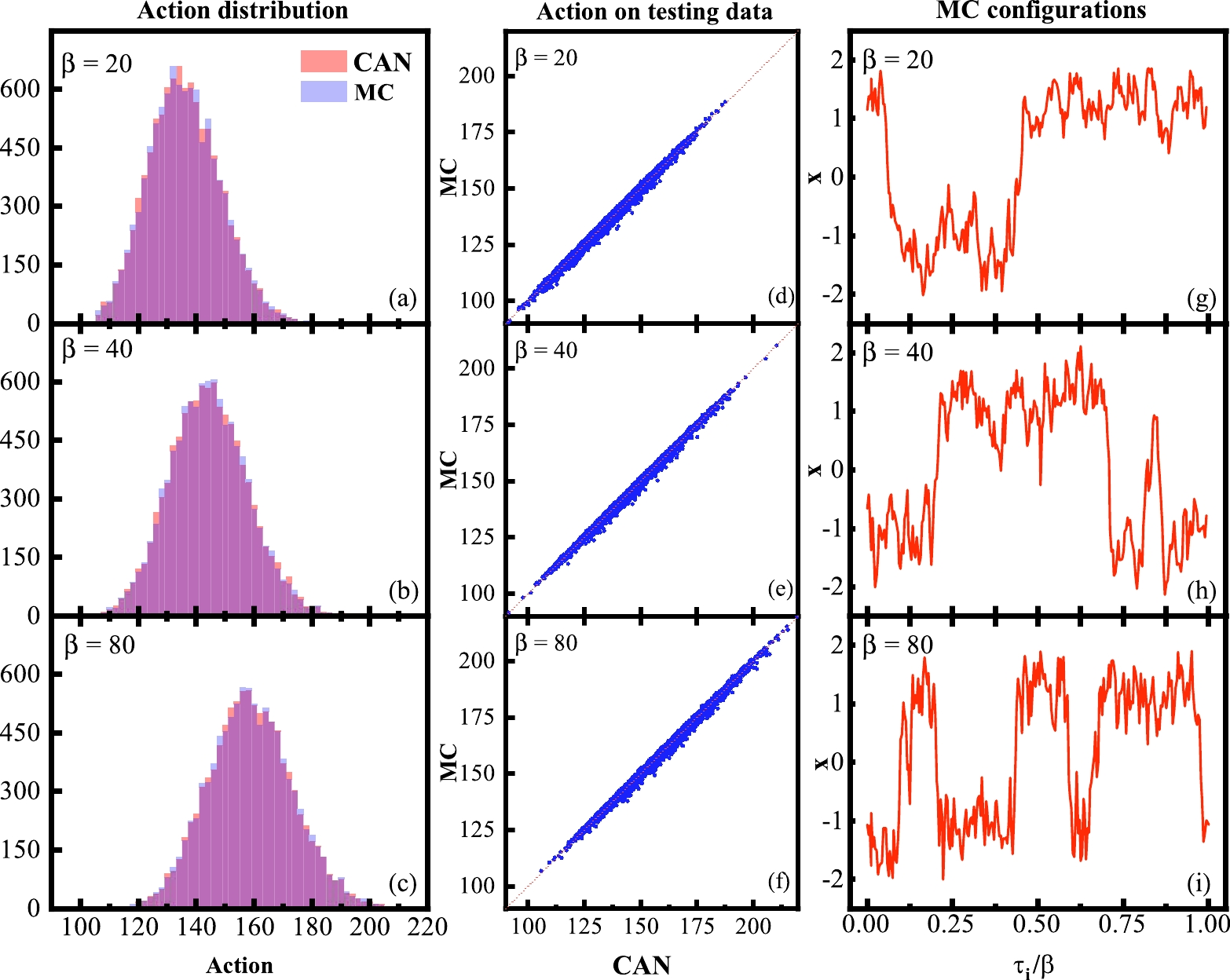

$ \beta=T^{-1}= $ 20, 40, and 80 through MCMC simulation. We trained the CANs to estimate the probability of each sample in these three ensembles over 10,000 epochs. Fig. 2 shows that convergence is the fastest for$ \beta=40 $ , slower for$ \beta=80 $ , and the slowest for$ \beta=80 $ . This means that the network is good at learning the ensemble with different types of configurations because both the kinetic and potential terms will contribute to the averaged loss equally. The two limit cases are more difficult to learn because the network will focus on the kinetic/potential part. As a result, the loss from the other part will be more difficult to reduce. Nevertheless, with a large enough number of epochs (larger than 2000), all the networks converged.The CANs provide a good estimate of the probability, as shown in Fig. 3. The first column shows the histograms of both the analytical (blue) and CAN output (red) actions of the test data. Given that the constant in Eq. (6) cannot be determined, we shifted the CAN histograms manually to set their peaks at the same position. The same shifting constant was adopted for further comparison. It is clear that they mostly coincide with each other. To demonstrate the estimation ability, a sample-wise comparison of these two actions is shown in the second column. Up to the above-mentioned constant, the two actions fall on a straight line whose slope equals 1. This means that the CANs can not only reproduce the correct distribution (histogram) of action for a given ensemble but, more importantly, the correct action for each sample. The third column shows typical configurations in the ensembles at each temperature. At larger β (low temperature), the configuration seems to be a link of more multi kinks and anti kinks. When temperature increases, the kinks and anti-kinks reduce in number and eventually vanish. This behavior agrees with our expectations and indicates that the ensembles distribute correctly. From these results, it is clearly demonstrated that CANs have the capacity to learn the possibility distribution at one single temperature. In this study, these networks successfully learned most of the actions of the samples up to a network-dependent constant without introducing the analytical Lagrangian density into the CANs.

Figure 3. (color online) (a)−(c) Comparisons of action distribution by MCMC (analytical) and CANs; (d)−(f) Comparisons of action on testing data by MC and CANs; (g)−(i) Monte Carlo configuration

$ {x_i} $ for$ \beta= $ 20, 40, and 80, respectively.Once two networks, corresponding to two different temperatures, were trained, the action of each sample in the third ensemble at a different temperature was extracted using Eq. (6) up to a sample-independent but network dependent constant. In the next section, we use networks trained at two temperatures of the three cases mentioned above to predict the sample actions at the third temperature.

-

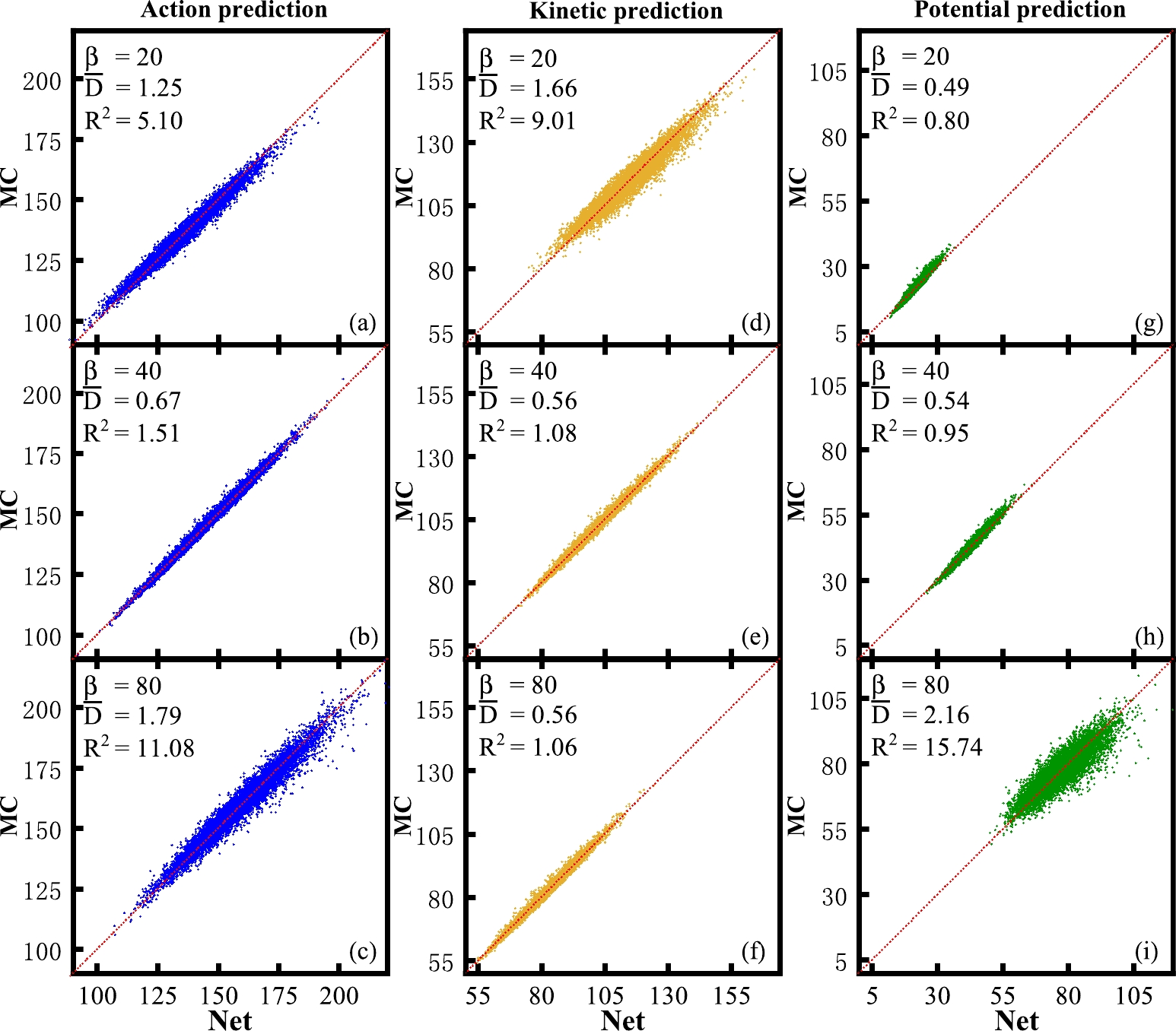

Once the networks are trained, it is easy to estimate actions from those at two other temperatures. Assuming that neither the network nor the analytical Lagrangian density at

$ \beta_3 $ are known, we searched for the action of an arbitrary sample at$ \beta_3 $ by making use of two trained neural networks at$ \beta_1 $ and$ \beta_2 $ according to the procedure presented in Sec. III using Eq. (6). Given that we had already obtained three networks at three temperatures, we selected any two of them, e.g.,$ \beta_1 $ and$ \beta_2 $ , to predict the remaining one, i.e.,$ \beta_3 $ , whose network became idle. The predicted total action, kinetic part (proportional to$ \beta^{-1} $ ), and potential part (proportional to β) of the 2-to-1 prediction and analytical actions are shown in the first, second, and third columns, respectively, in Fig. 4. Clearly, the first ($ \beta_{40, 80} $ to$ \beta_{20} $ ) and last ($ \beta_{20, 40} $ to$ \beta_{80} $ ) rows are extrapolation cases, whereas the second row ($ \beta_{20, 80} $ to$ \beta_{40} $ ) corresponds to interpolation. In these figures, we list two quantities, namely$ R^2 $ and$ \bar{D} $ , to show the prediction ability of the CANs.$ R^2 $ is the mean value of the square of the predicting error$R^2=\langle{(S_{\rm True}-S_{\rm CANs})^2}\rangle$ whereas$ \bar{D} $ is the mean of the distance to the red line with slope 1. We can see that the interpolation case, i.e.,$ \beta_{20, 80} $ to$ \beta_{40} $ , performs the best.

Figure 4. (color online) (a)−(c) Comparisons of action on testing data by MC and prediction network; (d)−(f) Same comparison for the kinetic part. (g)−(i) Same comparison for the potential part.

The reason for this emerges from the observation of the kinetic and potential part distributions. In the

$ \beta_{40, 80} $ to$ \beta_{20} $ case (first row), the$ \beta_{40} $ and$ \beta_{80} $ ensembles are more dominated by multi-kink and anti-kink configurations whose kinetic parts are relatively larger because of the larger derivative from the jump, i.e.,$ \pm 2 $ to$ \mp 2 $ in our computations. However, predicted ensembles$ \beta_{20} $ have less kinks/anti-kinks, which makes the kinetic part estimation not good enough. In the$ \beta_{20, 40} $ to$ \beta_{80} $ case (third row), the$ \beta_{80} $ ensemble has more multi-kinks/anti-kinks, i.e., more site values equal to$ \pm 2 $ , while in$ \beta_{20, 40} $ ensembles, more absolute site values are less than 2. This makes the potential part estimation not good enough. In the interpolation case, the training data include ensembles both at low and high temperatures, which makes the training data cover more different configurations. Therefore, the kinetic and potential parts both work well in the$ \beta_{20, 80} $ to$ \beta_{40} $ case. Physically, the low and high temperatures ensembles typically correspond to distinct phases of the system. Once the network has assimilated the information, it is equipped to predict the system's behavior at any intermediate temperature stage. This capability is crucial for detailed exploration of the phase diagram. -

In this study, we propose a paradigm for constructing an effective model using ANNs once an ensemble of a certain d.o.f has been obtained. Utilizing CANs and a Higgs-like 0+1D quantum field model, we demonstrate the construction process. For this model, there is a topological phase transition from a state dominated by kinks and anti-kinks to a state without kinks as the temperature increases from low to high. Using ensembles generated by the traditional Markov Chain Monte Carlo (MCMC), the CANs successfully extracted the probability of each sample. By utilizing two trained networks at different temperatures, the action of a sample at an arbitrary third temperature was easily determined using Eq. (6). As expected, the predictions were most accurate when interpolating. This approach is beneficial for constructing a new effective model targeting specific d.o.f once the ensembles of the fundamental

$d.o.f $ have been established.This novel paradigm is particularly effective for investigating phase transitions, e.g., deconfinement, distinguishing it from other applications of supervised learning applications [30, 40, 41]. Additionally, it is more user-friendly compared to existing unsupervised methods [42, 43]. For instance, let us consider Quantum Chromodynamics (QCD) at finite temperature. It is suggested that around the critical temperature for deconfinement, a soliton solution of gluons, known as a dyon, dominates the system. With complicated computations, the gluon-quark system governed by QCD can be converted into a dyon-quark ensemble, wherein dyons interact in intricate ways. Although such a dyon ensemble has been obtained analytically after extensive efforts [44], further calculations regarding physical observables still rely on numerical simulation. Consequently, employing the methodology described in this paper will be advantageous for developing an effective model using ANNs.

If the ensemble of gluon configurations can be obtained through lattice simulation, it is possible to first transform the fundamental gluon field into multiple dyons and anti-dyons in space, thereby converting the gluon ensemble into a dyon ensemble. Subsequently, an effective numerical model can be developed by utilizing these dyon ensembles with the methodology described in this paper. As previously suggested, the numerical model must comprise two trained networks. At any given temperature, the action at a third temperature can be calculated. This action can then be utilized as if it were derived from the analytical dyon ensemble model. The same procedure can be applied to any effective d.o.f of various systems.

Building imaginary-time thermal field theory with artificial neural networks

- Received Date: 2024-05-12

- Available Online: 2024-10-15

Abstract: In this paper, we introduce a novel approach in quantum field theories to estimate actions using artificial neural networks (ANNs). The actions are estimated by learning system configurations governed by the Boltzmann factor,

Abstract

Abstract HTML

HTML Reference

Reference Related

Related PDF

PDF

DownLoad:

DownLoad: